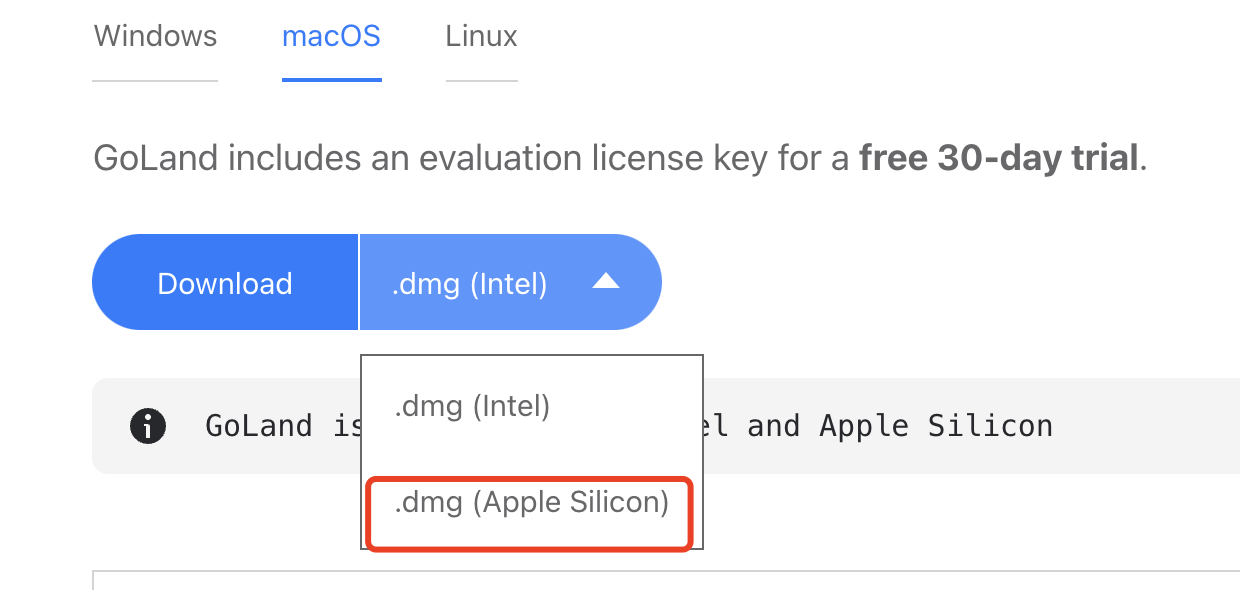

Debugging a potential deadlock in Go with go-deadlock

Recently, I tackled a recurring issue whose cause wasn't clear for weeks. My team would "do stuff", and then the problem would go away, only to come back a few days to a week later. However, after some hours of debugging it made complete sense. I had just been looking for the problem in the wrong place.

Every week or so, we would get a bug report from a client stating that our web application was taking a long time to load, didn't seem to load at all, or that actions were slow. It appeared to happen for only one client at a time, and we were all able to see the behavior when it happened. Yet it would generally clear up after a restart of a backing service or after cleaning up some data. However, this time, our quick fixes were not working.

In one of our backing services for the application, each group has its own `Room`, so to speak. We were locking the members list before we'd broadcast a message to the room to avoid any data races or possible crashes. We couldn't obtain a lock because our `Broadcast` function was still using it to send out messages.

Initial investigations pointed to something in the backing service that was getting hung up, but how did we find out where? Thankfully, with the help of go-deadlock, a tool that tracks live mutex usage, we could see that this was occurring. The tool reports when a goroutine has had access to a mutex for 30 seconds or more. The API mirrors the standard Go libraries, making it an easy drop-in checker.

The results pointed to the `Add` function waiting on the `Broadcast` function to release its lock. All of a sudden the client reports made total sense (especially when we found out they were dealing with network sluggishness):

1. A member suffering from high latency joins the room (`Add`) with other members.
2. As soon as they pull an update (`Broadcast`), all the members start noticing slow updates.
3. Members reload the application, hoping that it will fix the problem, and try to rejoin (`Add`).
4. However, they cannot, because they are waiting for a `Broadcast` to finish, and it has been slowed down by the high-latency member.

Since we needed the lock in `Broadcast` so that our members list would not change on us, the solution was to execute all the sends in parallel after getting what we needed from the lock, logging any failed send with a call along the lines of `Printf("Broadcast: %v: %v", r.instance, err)`. With this change:

- No member needs to wait on another to get a broadcast message.
- Members join a room without having to wait.

Since goroutines are cheap and the sockets are already established (via WebSocket), multiple asynchronous calls like this shouldn't be an issue.

As pointed out in the discussion, this solution does not guarantee that messages will be delivered in order, and that may not be OK for your application.

This particular service that caused the application to fail had been in production for many months without any reported issues of this kind, which led to the false assumption that the service was doing great, as it handles hundreds of thousands of messages a day. It had a glaring issue that was brought to light only under the right circumstances.
